TRIOPTICS brings the complex driver assistance systems of the automotive industry onto the road. We support manufacturers and suppliers in their task of ensuring the quality, longevity and sustainability of their systems. Our system solutions for active alignment and assembly enable the mass production of camera modules with appropriate testing technology. Get to know some of our innovations, which are extremely important for autonomous vehicles. Make the change.

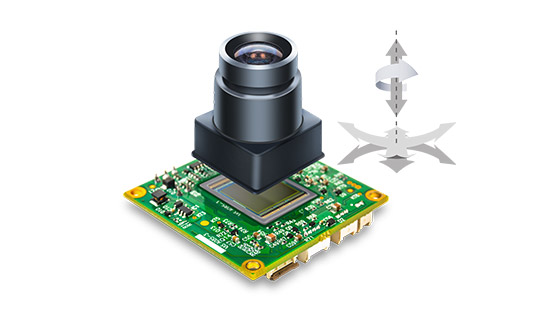

Even with a perfect lens system, the image quality of a complete camera deteriorates if the lens is poorly aligned with the sensor. This effect grows with the increasing performance of ADAS camera modules and the higher resolution of sensors. For this reason, high-precision active alignment of optics and sensor is necessary in the production of camera modules. With our ProCam® technology, the sensor is aligned with the camera optics in up to six degrees of freedom with sub-micron/sub-arcmin resolution. The components are focused, centered, tilt-adjusted and rotated in a single alignment step.
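To illustrate how focus and tilt can be derived from image-quality measurements, the following minimal sketch uses a common textbook approach: a through-focus MTF scan at several field points, a parabola fit per field point to find its best focus, and a least-squares plane through those best-focus positions. It is not the ProCam® algorithm; the function names, field positions and MTF curves are assumptions made up for illustration.

```python
import numpy as np


def best_focus(z, mtf):
    """Return the z position of the MTF maximum from a parabola fit."""
    a, b, _ = np.polyfit(z, mtf, 2)
    if a >= 0:
        raise ValueError("through-focus curve has no maximum (fit is not concave)")
    return -b / (2.0 * a)


def fit_image_plane(points_xy, z_best):
    """Least-squares plane z = sx*x + sy*y + z0 through the best-focus points."""
    xy = np.asarray(points_xy, dtype=float)
    design = np.column_stack([xy[:, 0], xy[:, 1], np.ones(len(xy))])
    coeffs, *_ = np.linalg.lstsq(design, np.asarray(z_best, dtype=float), rcond=None)
    sx, sy, z0 = coeffs
    return sx, sy, z0


# Synthetic example; all numbers are hypothetical, units are millimetres.
field_points = [(-2.0, -1.5), (2.0, -1.5), (-2.0, 1.5), (2.0, 1.5), (0.0, 0.0)]
z_scan = np.linspace(-0.03, 0.03, 13)
true_focus = [0.001 * x - 0.0005 * y + 0.010 for x, y in field_points]
curves = [0.6 - 100.0 * (z_scan - zf) ** 2 for zf in true_focus]

z_best = [best_focus(z_scan, mtf) for mtf in curves]
sx, sy, z0 = fit_image_plane(field_points, z_best)

# Slopes of the best-focus plane converted to tilt angles in arcminutes.
tilt_along_x_arcmin = np.degrees(np.arctan(sx)) * 60.0
tilt_along_y_arcmin = np.degrees(np.arctan(sy)) * 60.0
print(f"defocus: {z0 * 1e3:.2f} um")
print(f"tilt along x: {tilt_along_x_arcmin:.2f} arcmin, along y: {tilt_along_y_arcmin:.2f} arcmin")
```

The fitted offset and slopes are the corrections a positioning stage would apply in focus and tilt. Centering and rotation need additional measurements, such as the image position of known targets, and are left out of this sketch.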

The use of complex camera systems for safety-related, automated object recognition and classification places higher demands on characterizing the image quality of ADAS camera modules. This means that the entire test chain must also meet these new requirements. For this purpose, TRIOPTICS offers CamTest end-of-line test systems which, in addition to the usual optical and opto-mechanical parameters such as MTF, SFR, defocus, tilt and rotation of the image plane, also cover additional sensor test parameters. These include OECF, dynamic range, white balance, relative illumination, spectral response and more.

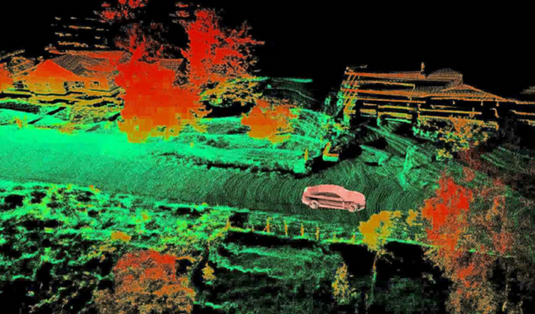

There is one thing that Flash-LiDAR, solid-state LiDAR or mechanical LiDAR scanning systems certainly have in common:

The interaction of the optics and sensor chip of the emitter and receiver unit of the LiDAR sensor must be absolutely precise and perfectly aligned.

If the components are not precisely positioned, objects and distances cannot be reliably detected. In the worst case, this could compromise the safety of autonomous vehicles.

Accordingly, perfect performance must be ensured from the first sample to the series product. We are happy to support you in choosing the right production method and to manufacture the important first LiDAR prototypes together with you.

Advanced Driver Assistance System (ADAS) technologies increase the demand for high-quality machine vision systems and optical sensors. As these technologies mature, imaging systems are required to enable better optical performance. Reduction in pixel size and an increase in pixel density of modern image sensors make it difficult to align optical lenses and sensors with the required precision. This presentation describes the latest achievements in actively aligning a lens and camera sensor for optimal image quality. The theoretical limits of accuracies determined by the physical properties of lens and sensor are compared with examples from practice.

The presentation outlines the early development of processes for passive and active alignment as well as for testing of the optical systems. Using the same metrology, the same control algorithms and the same software tools from prototyping, the scaling up for series production could be ensured without changing the technology or the basic equipment.

How do you choose the best optical assembly method for a given LiDAR type? TRIOPTICS classifies the alignment of LiDAR components into two different methods: active and passive alignment. Which method is best depends on the customer-specific LiDAR system.

Active Alignment of LiDAR works in a similar way to Active Alignment of cameras. Typical features of the Active Alignment method for LiDAR:

- The laser is activated and/or the receiver is switched on for image acquisition
- The alignment is done by detecting the laser beam pattern or by image processing of the receiver signal

Passive Alignment refers to a process that uses inactive (passive) components of the LiDAR sensor.

Typical features of the Passive Alignment method for LiDAR:

- The laser and/or the receiver are not powered up
- The alignment is done using visible structures or special markings as reference

Dr. Dirk Seebaum | Automotive expert at TRIOPTICS

In the automotive sector, cameras have been in use for years, e.g. as rear view cameras. Recently, new applications have been added, while at the same time the demands on image quality and image processing have increased. Vision technologies in the automotive sector are therefore a growth market with constantly increasing quality requirements.

Rear view and front view cameras are often already standard equipment in motor vehicles. Newer safety-related systems that use cameras, and thus image processing, include lane departure warning, lane change assistance, traffic sign recognition, blind spot monitoring, infrared night vision and emergency braking systems for pedestrian protection. Wherever cameras increase the safety of road users and provide drivers with additional information, there is great potential for the use of camera systems.

Vision technologies are used in particular in advanced driver assistance systems (ADAS). ADAS are electronic auxiliary devices in motor vehicles that support the driver in certain driving situations. The focus is often on safety, but also on increased driving comfort. The vision systems perform the main tasks and are often supported by other technologies such as radar or LiDAR.

The use of vision technologies in the automotive sector places special demands on cameras. The desire for a wide image field, e.g. for Surround View, causes strong image distortions that have to be corrected by calibration. In addition, the camera system must be able to cover a wide dynamic range of intensity, for example to detect lane markings in a dark tunnel with a view of the tunnel exit. The camera system must also be robust against vibrations, strong temperature fluctuations and dirt. These are just a few of the challenges that need to be considered during design, assembly and testing.
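To give a feel for what a wide dynamic range means in numbers, the short sketch below converts an intensity ratio into decibels (using the 20·log10 convention customary for image sensors) and into photographic stops. The function names and the tunnel intensities are hypothetical and chosen only for illustration; they are not measured values.

```python
import math


def dynamic_range_db(brightest, darkest):
    """Intensity ratio in decibels, using the 20*log10 image-sensor convention."""
    if brightest <= 0 or darkest <= 0:
        raise ValueError("intensities must be positive")
    return 20.0 * math.log10(brightest / darkest)


def dynamic_range_stops(brightest, darkest):
    """Intensity ratio in stops (factors of two)."""
    if brightest <= 0 or darkest <= 0:
        raise ValueError("intensities must be positive")
    return math.log2(brightest / darkest)


# Hypothetical scene: dark tunnel wall vs. sunlit tunnel exit, in relative units.
tunnel_wall = 1.0
tunnel_exit = 100_000.0
print(f"{dynamic_range_db(tunnel_exit, tunnel_wall):.0f} dB")
print(f"{dynamic_range_stops(tunnel_exit, tunnel_wall):.1f} stops")
```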

An essential challenge lies in the increasing demands on image quality. Higher resolutions require higher pixel counts, often accompanied by smaller pixel dimensions and decreasing depth of field of the lenses. The systems for aligning and testing the cameras must also meet this challenge. Accordingly, TRIOPTICS systems are becoming more and more precise, both in the optical measuring equipment and in the accuracy of the mechanical systems. The typical requirements of the automotive industry for optimum productivity and availability can only be met with reliable system technology and fast processes. The algorithms for controlling and monitoring the systems are constantly being developed further for this purpose. The use and analysis of data now plays a major role here: on the one hand, it provides information for the traceability of products and for integration into higher-level production systems; on the other hand, it creates additional advantages through optimal cycle times and reduced plant downtimes, achieved with intelligent, predictive processes in line with Industry 4.0.
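The link between smaller pixels and tighter alignment tolerances can be estimated with a common geometric rule of thumb: the image-side depth of focus is roughly ±N·c, where N is the f-number and c the acceptable blur diameter. The sketch below assumes c equals one pixel pitch and that the whole focus budget may be spent on sensor tilt; both are simplifying assumptions, and the f-number, pixel pitches and sensor size are hypothetical values, not TRIOPTICS specifications.

```python
import math


def focus_tolerance_um(f_number, pixel_pitch_um):
    """Half depth of focus (+/-) in micrometres, with blur circle = one pixel pitch."""
    return f_number * pixel_pitch_um


def tilt_tolerance_arcmin(f_number, pixel_pitch_um, half_width_mm):
    """Sensor tilt at which the sensor edge reaches the focus tolerance."""
    dz_mm = focus_tolerance_um(f_number, pixel_pitch_um) * 1e-3
    return math.degrees(math.atan(dz_mm / half_width_mm)) * 60.0


# Hypothetical lens and sensor: f/1.6, sensor half width 3 mm.
F_NUMBER = 1.6
HALF_WIDTH_MM = 3.0
for pitch_um in (4.2, 3.0, 2.1):
    dz = focus_tolerance_um(F_NUMBER, pitch_um)
    tilt = tilt_tolerance_arcmin(F_NUMBER, pitch_um, HALF_WIDTH_MM)
    print(f"pixel {pitch_um} um: focus +/-{dz:.2f} um, tilt +/-{tilt:.2f} arcmin")
```

Under these assumptions, halving the pixel pitch halves both the focus and the tilt budget, which is why alignment and test equipment has to become more precise as sensors gain resolution.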

The TRIOPTICS product groups ProCam® and CamTest include a variety of devices for active alignment, assembly and testing of high-resolution camera modules and other optical sensor systems. Especially in safety-related automotive applications, the cameras used must deliver reliable and optimal image quality. This is ensured by actively aligning lens and sensor to each other and by extensive test procedures. TRIOPTICS works continuously on the further development of these technologies and cooperates with important manufacturers of leading driver assistance systems (ADAS).